A computer aided detection framework for mammographic images using fisher linear discriminant and nearest neighbor classifier ()

1. INTRODUCTION

Breast cancer is the most common form of cancer in women; however, its early detection has proven to save lives. In the USA, 39,840 women and 390 males died due to this disease in 2010. Currently, there are more than two and a half million women living in United States have been diagnosed and treated from breast cancer [1]. The National Cancer Institute also estimates that 12.7% of women born today will be diagnosed with breast cancer at some time in their lives [1].

Today, mammography is the best method for early detection of breast cancer. Lesion size, density of breast tissue, age of the patient, image quality, and the radiologist’s skills to interpret the mammogram are the factors that affect sensitivity of cancerous tissues identification. The best known possible remedy and successful treatment for breast cancer is the early detection as it has considerably reduced the mortality rates in the past years [2]. It is hence very important for women to monitor their risk factors and maintain their periodical screening.

Radiologists failed to detect evident cancerous signs in approximately 20% of false negative mammograms [3]. The subtle difference between the cancerous and noncancerous regions is the main cause of false diagnosis. The sensitivity of mammography has been reported to improve if two radiologists examine the mammogram [4]. However, this is a costly solution and therefore other alternatives to this problem have to be investigated. Computer Aided Detection (CAD) is one of those alternatives. The CAD is a system used to assist radiologists through reading, analyzing, and then labelling the mammograms as normal or abnormal. It was reported that the cancer detection rates of a single reader with a CAD system and of two readers are similar [5].

A number of researchers have investigated principal component analysis (PCA), Fisher linear discriminant (FLD), and nearest neighbor classifier (KNN) algorithms. For instance, in [6], Hough transform, PCA, and Euclidean distance were integrated to detect abnormalities in mammograms. In [7], PCA and FLD algorithms were cascaded as dimensionality reduction modules followed by a discriminant analysis classifier. In [8], PCA, linear discriminant analysis (LDA), and probabilistic classification have been integrated to classify healthy and diseased human blood serum. In [9], PCA, LDA, and PCA-LDA were investigated as dimensionality reduction techniques for speech recognition. Results indicated that the combined PCA-LDA outperformed the individual algorithms. In [10], the performance of PCA-KNN and PCA-LDA was investigated for the face recognition problem. It was concluded that PCA-KNN outperformed PCA-LDA.

In this work, we develop a CAD system that confidently provides the radiologist a second reader opinion about mammographic images. The proposed CAD system integrates PCA as a decorrelation-based module, FLD as a dimensionality reduction and feature extraction module, and KNN as a classification module. The integration of PCA and FLD allows the CAD algorithm to use more than one Eigen vector in the Fisher domain which should improve the classification accuracy. The rest of this paper is organized as follows: section 2 presents PCA, FLD, and KNN algorithms. The proposed integrated approach is presented in Section 3. Section 4 presents the experimental results followed by the conclusions in Section 5.

2. THEORY

2.1. Principal Component Analysis

PCA is a linear transformation and a decorrelation-based technique that maps a high dimensional space into a lower dimensional space. PCA is used as a preprocessing step to improve speed and accuracy of the classification stage while decreasing its complexity. PCA transforms the data set into a different coordinate system where the first coordinate in the transformed domain, called the principal component, has the maximum variance and the rest of the coordinates have lesser and lesser variance values.

2.2. Fisher Linear Discriminant

Linear discriminant analysis (LDA) is used to discriminate between data classes and most commonly used in the two-class problem [11]. On the other hand, Fisher linear discriminant (FLD) is the benchmark for linear discrimination between two classes in the multidimensional space [11]. FLD was reported with attractive computational complexity since it is only based on the first and second moments of the data distribution [11].

FLD uses a projection matrix W to reshape the data set’s scatter matrix in order to maximize the classes’ separability. W represents the optimally discriminating features and is defined as the ratio of between-class scatter to within-class scatter. The transformation matrix W is defined as [12]:

(1)

(1)

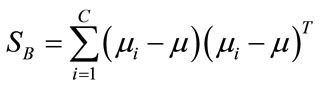

where X is the data matrix and  is the between-class scatter matrix. For a two-class problem,

is the between-class scatter matrix. For a two-class problem,  is defined as

is defined as

(2)

(2)

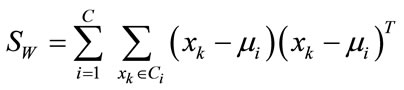

and  is the within-class scatter matrix defined as

is the within-class scatter matrix defined as

(3)

(3)

where C represents number of classes,  is the mean of samples in class i. Then, the matrix

is the mean of samples in class i. Then, the matrix

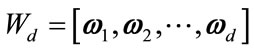

is constructed according to Equation (1). Next, the matrix  is formed by retaining d eigenvectors which is defined as

is formed by retaining d eigenvectors which is defined as  where d is less than D. The output matrix

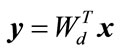

where d is less than D. The output matrix  is the projection of vector

is the projection of vector  into a subspace of d dimension.

into a subspace of d dimension.

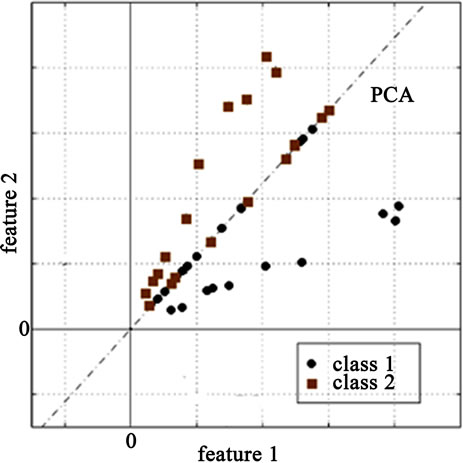

The PCA algorithm transforms the data into an Eigenspace that uncorrelates the data. However, in case of a two-class problem, the two classes are not completely linearly separable as shown in Figure 1(a) which complicates and degrades the classification phase. Therefore, FLD algorithm is applied after PCA resulting in a better between-class scatter as shown in Figure 1(b). Consequently, the classification results should be greatly improved.

(a)

(a) (b)

(b)

Figure 1. (a) The resultant data after PCA for the two-class problem; (b) The resultant data after PCA-FLD.

2.3. Nearest Neighbor Classifier

The Nearest Neighbor is a simple yet a robust classifier where an object is assigned to the class to which the majority of the nearest neighbors belong. It is important to consider only those neighbors for which a correct classification is already known (i.e., training set). All the objects are considered to be present in the multidimensional feature space and are represented by position vectors where these vectors are obtained through calculating the distance between the object and its neighbors. The multidimensional space is divided into regions utilizing the locations and labels of the training data. An object in this space will be labeled with the class that has the majority of votes among the k-nearest neighbors. The algorithm of the nearest neighbor classifier can be summarized as follows:

- In the training phase, the feature vectors and their class labels are found as  where each

where each  is a label that belongs to one of the classes

is a label that belongs to one of the classes .

.

- In the testing phase, the distance of a testing vector to the training vectors is computed and the closest training sample is chosen. Then, the testing sample is labeled according to the label of the nearest neighbor.

3. PROPOSED CAD ALGORITHM

In this section, the framework of the breast cancer computer aided detection system is developed. PCA algorithm is used as a decorrelation-based module followed by FLD as a dimensionality reduction and a feature extraction module. Finally, a KNN classifier is used to classify the testing sub-images into normal or abnormal.

Mammographic images from the MIAS database were used, which has a total 203 normal mammograms and 119 suspicious ones. A total of 144 images were cropped and scaled to 50 × 50 pixels from the database forming 72 normal and 72 suspicious sub-images. A total of 3 training sets are created. Each training set consisted of 48 sub-images: 24 suspicious and 24 normal sub-images.

The proposed CAD system consists of a training phase, testing phase, and classification phase. The following steps summarize the training phase:

- Each sub-image in the training set is converted into a column vector gk where  Then, a training matrix Gjk is formed by placing the sub-images as columns where j = 2500 and

Then, a training matrix Gjk is formed by placing the sub-images as columns where j = 2500 and

- Row-wise mean of the matrix Gjk is computed which results in a column vector A.

- A matrix Bjk is formed by repeating the column vector A number of times equal to number of the sub-images (i.e., 48).

- The deviation of each sub-image from the row-wise mean of the sub-images is calculated per Djk = Gjk – Bjk..

- The covariance matrix of Djk is computed using Eq.4:

(4)

(4)

where m is the number of rows in A (i.e., 48).

- The eigenvalues λ and eigenvectors V of the matrix Cmm are computed using the PCA algorithm according to eq.5. This result in m eigenvectors and m eigenvalues sorted in a descending order.

(5)

(5)

- The centered sub-images matrix Djk is projected onto the Eigenspace per eq.6.

(6)

(6)

- Two types of scatter matrices are used in this step. The first one is the within-class scatter matrix  representing the scatter of a single class and the second one is the between-class scatter matrix

representing the scatter of a single class and the second one is the between-class scatter matrix  representing the scatter of different classes:

representing the scatter of different classes:

where C represents number of classes,  is the mean of samples in class i where i = {1, 2}, and

is the mean of samples in class i where i = {1, 2}, and  is the mean of all samples in the training matrix. Both

is the mean of all samples in the training matrix. Both  and

and  are of dimension 48 × 48.

are of dimension 48 × 48.

- A linear transformation matrix W is computed as:

where  is the Fisher criterion that maximizes the between-class scatter while minimizes the withinclass scatter and

is the Fisher criterion that maximizes the between-class scatter while minimizes the withinclass scatter and  is the set of m Eigenvectors and m eigenvalues of

is the set of m Eigenvectors and m eigenvalues of  and

and . The transformation W is another projection into the Eigenspace

. The transformation W is another projection into the Eigenspace  such that

such that

(7)

(7)

A number of eigenvalues is retained (Nev) along with their corresponding Eigenvectors (Vfe) where the dimension of Vfe is M × Nev.

- The matrix Ymm is projected onto the Fisher linear space Zpq using the Eigenvectors Vfe as shown in Eq.8.

(8)

(8)

where the dimension of Zpq is Nev × 48.

On the other hand, the testing phase can be summarized in the following steps:

- A total of three testing sets are used where each test set consists of 48 sub-images: 24 normal and 24 abnormal sub-images.

- Let the testing set be represented as .

.

- For each testing sub-image , the difference between the sub-image and the mean of the training set A is computed using Eq.9.

, the difference between the sub-image and the mean of the training set A is computed using Eq.9.

(9)

(9)

- The difference γjk is projected onto the Eigenspace Ymm and the Eigenvectors space Vfe as shown in Eq.10.

(10)

(10)

For the classification phase, two classes are assumed: one class for the abnormal sub-images and the other one for normal sub-images. First, the Euclidean distance between the testing matrix Ppq and each column of the Fisher linear space Zpq is computed. This distance provides an accurate measure for the classification phase. Then, the nearest neighbor to the test sample is selected based on the calculated distances in the previous step. Then, the testing sub-image is assigned to the class of the nearest neighbor.

4. RESULTS AND DISCUSSION

The literature reported that number of retained Fisher values for the classification stage should be limited to be one less than number of classes [4]. However, for the two-class problem the retained Fisher values should be increased to improve the classification stage [9]. Therefore, in this work, 11 Fisher values are retained which have been determined as a result of experimental evaluation as discussed below.

Table 1 shows the results of the proposed CAD algorithm. Algorithm accuracy is defined as the ratio between number of correctly classified testing sub-images and total number of testing sub-images. A total of 72 subimages were used for the testing phase. As Table 1 indicates, the proposed CAD algorithm has classification accuracy over 91.67% in all the three test sets with average classification accuracy of 93.06%. Table 1 also indicates average false negative (FN) rate, an abnormal mammogram classified as normal mammogram, and false positive (FP) rate, a normal mammogram classified as abnormal mammogram, of 6.94% and 0%.

PCA is employed globally to uncorrelate the training data where all the principal components are retained. However, PCA uncorrelates the first few principal components in the transformed data while the rest of the components are still highly correlated. On the other hand, FLD is used as a dimensionality reduction and feature

Table 1. Classification accuracy, FP, and FN rates of the three test sets. Each set consists of 24 normal and 24 abnormal subimages.

extraction module. FLD is applied, which uses the basis provided by the PCA, to generate a new set of basis for the classification stage. FLD uncorrelates the data again by taking into account the different classes present in the data. This dual transformation into the Eigenspace uncorrelates the data two times which should greatly improve the classification results.

In testing set no. 1, three abnormal images were not correctly classified: one has architectural distortion while the others have spiculated masses. In testing set no. 2, four abnormal images were not correctly classified: three have microcalcifications while the other one has spiculated masses. In testing set no. 3, three abnormal images were not correctly classified: two have Architectural Distortions while the other one has spiculated masses. Microcalcifications are very hard to detect as they are very small and are non-palpable. Spiculated masses have irregular shapes with sharp edges and thus pose a big challenge for detection. Architectural distortions are one of the most commonly missed signs of breast cancer. Two third of cancer is related to architectural distortions that have positive margins.

The proposed CAD system uses several parameters that impact the performance and accuracy of results such as the number of selected principal components (PC), number of retained Fisher values, and number of nearest neighbors.

Figure 2 shows the impact of retaining different number of principal components on the classification accuracy for testing sets 1 to 3. These results indicate that selecting all the principal components achieves the highest accuracy. Thus, PCA is used in this work to decorrelate the data without reducing its dimensionality. Even though most of the information is contained in the first few principal components, discarding the least significant principal components may result in loss of information depending on the application.