D. VÄSTFJÄLL

Copyright © 2012 SciRes. 609

REFERENCES

Asutay, E., & Västfjäll, D. (2012). Perception of loudness is influenced by emotion. PLoS ONE, 7, e38660. doi:10.1371/journal.pone.0038660

Asutay, E., Västfjäll, D., Tajadura-Jimenez, A., Genell, A., Bergman, P., & Kleiner, M. (2012). Emoacoustics: A study of the psychoacoustical and psychological dimensions of emotional sound design. Journal of the Audio Engineering Society, 60, 21-28.

Ballas, J. A. (1993). Common factors in the identification of an assortment of brief everyday sounds. Journal of Experimental Psychology: Human Perception and Performance, 19, 250-267. doi:10.1037/0096-1523.19.2.250

Berglund, B., Hassmén, P., & Preis, A. (2002). Annoyance and spectral contrast are cues for similarity and preference of sounds. Journal of Sound and Vibration, 250, 53-64. doi:10.1006/jsvi.2001.3889

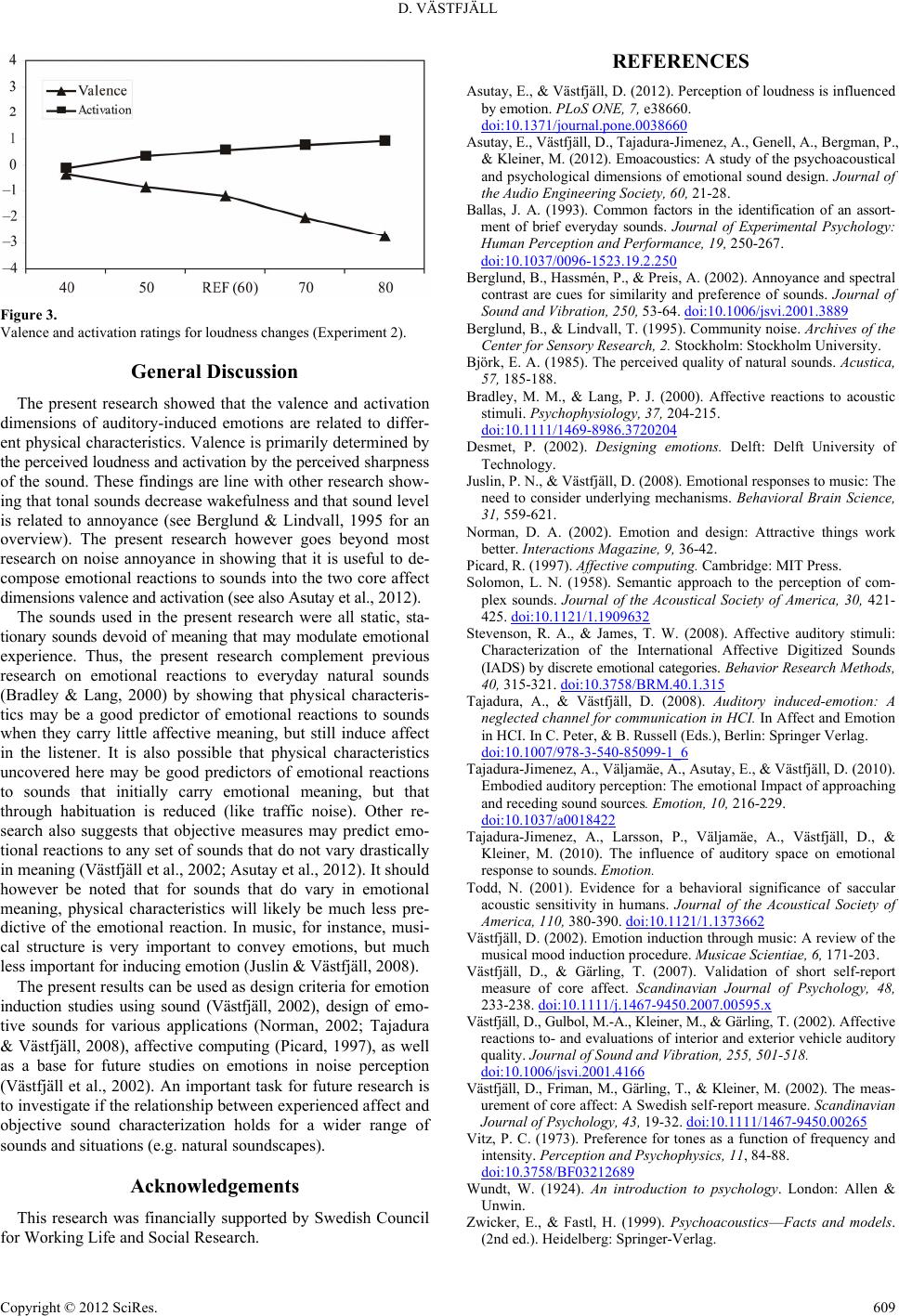

Figure 3. Valence and activation ratings for loudness changes (Experiment 2).

Berglund, B., & Lindvall, T. (1995). Community noise. Archives of the Center for Sensory Research, 2. Stockholm: Stockholm University.

Björk, E. A. (1985). The perceived quality of natural sounds. Acustica, 57, 185-188.

Bradley, M. M., & Lang, P. J. (2000). Affective reactions to acoustic stimuli. Psychophysiology, 37, 204-215. doi:10.1111/1469-8986.3720204

General Discussion

The present research showed that the valence and activation dimensions of auditory-induced emotions are related to different physical characteristics. Valence is primarily determined by the perceived loudness and activation by the perceived sharpness of the sound. These findings are in line with other research showing that tonal sounds decrease wakefulness and that sound level is related to annoyance (see Berglund & Lindvall, 1995, for an overview). The present research, however, goes beyond most research on noise annoyance in showing that it is useful to decompose emotional reactions to sounds into the two core affect dimensions of valence and activation (see also Asutay et al., 2012).

Desmet, P. (2002). Designing emotions. Delft: Delft University of Technology.

Juslin, P. N., & Västfjäll, D. (2008). Emotional responses to music: The need to consider underlying mechanisms. Behavioral and Brain Sciences, 31, 559-621.

Norman, D. A. (2002). Emotion and design: Attractive things work better. Interactions Magazine, 9, 36-42.

Picard, R. (1997). Affective computing. Cambridge: MIT Press.

Solomon, L. N. (1958). Semantic approach to the perception of complex sounds. Journal of the Acoustical Society of America, 30, 421-425. doi:10.1121/1.1909632

The sounds used in the present research were all static, stationary sounds devoid of meaning that may modulate emotional experience. Thus, the present research complements previous research on emotional reactions to everyday natural sounds (Bradley & Lang, 2000) by showing that physical characteristics may be a good predictor of emotional reactions to sounds when they carry little affective meaning but still induce affect in the listener. It is also possible that the physical characteristics uncovered here may be good predictors of emotional reactions to sounds that initially carry emotional meaning that is later reduced through habituation (like traffic noise). Other research also suggests that objective measures may predict emotional reactions to any set of sounds that do not vary drastically in meaning (Västfjäll et al., 2002; Asutay et al., 2012). It should, however, be noted that for sounds that do vary in emotional meaning, physical characteristics will likely be much less predictive of the emotional reaction. In music, for instance, musical structure is very important to convey emotions, but much less important for inducing emotion (Juslin & Västfjäll, 2008).

Stevenson, R. A., & James, T. W. (2008). Affective auditory stimuli: Characterization of the International Affective Digitized Sounds (IADS) by discrete emotional categories. Behavior Research Methods, 40, 315-321. doi:10.3758/BRM.40.1.315

Tajadura, A., & Västfjäll, D. (2008). Auditory induced-emotion: A neglected channel for communication in HCI. In C. Peter, & B. Russell (Eds.), Affect and Emotion in HCI. Berlin: Springer Verlag. doi:10.1007/978-3-540-85099-1_6

Tajadura-Jimenez, A., Väljamäe, A., Asutay, E., & Västfjäll, D. (2010). Embodied auditory perception: The emotional impact of approaching and receding sound sources. Emotion, 10, 216-229. doi:10.1037/a0018422

Tajadura-Jimenez, A., Larsson, P., Väljamäe, A., Västfjäll, D., & Kleiner, M. (2010). The influence of auditory space on emotional response to sounds. Emotion.

Todd, N. (2001). Evidence for a behavioral significance of saccular acoustic sensitivity in humans. Journal of the Acoustical Society of America, 110, 380-390. doi:10.1121/1.1373662

Västfjäll, D. (2002). Emotion induction through music: A review of the musical mood induction procedure. Musicae Scientiae, 6, 171-203.

Västfjäll, D., & Gärling, T. (2007). Validation of short self-report measure of core affect. Scandinavian Journal of Psychology, 48, 233-238. doi:10.1111/j.1467-9450.2007.00595.x

The present results can be used as design criteria for emotion induction studies using sound (Västfjäll, 2002), for the design of emotive sounds for various applications (Norman, 2002; Tajadura & Västfjäll, 2008), and for affective computing (Picard, 1997), as well as a basis for future studies on emotions in noise perception (Västfjäll et al., 2002). An important task for future research is to investigate whether the relationship between experienced affect and objective sound characterization holds for a wider range of sounds and situations (e.g., natural soundscapes).

Västfjäll, D., Gulbol, M.-A., Kleiner, M., & Gärling, T. (2002). Affective reactions to and evaluations of interior and exterior vehicle auditory quality. Journal of Sound and Vibration, 255, 501-518. doi:10.1006/jsvi.2001.4166

Västfjäll, D., Friman, M., Gärling, T., & Kleiner, M. (2002). The measurement of core affect: A Swedish self-report measure. Scandinavian Journal of Psychology, 43, 19-32. doi:10.1111/1467-9450.00265

Vitz, P. C. (1973). Preference for tones as a function of frequency and intensity. Perception and Psychophysics, 11, 84-88. doi:10.3758/BF03212689

Wundt, W. (1924). An introduction to psychology. London: Allen & Unwin.

Zwicker, E., & Fastl, H. (1999). Psychoacoustics: Facts and models (2nd ed.). Heidelberg: Springer-Verlag.

Acknowledgements

This research was financially supported by the Swedish Council for Working Life and Social Research.