A New Aware-Context Collaborative Filtering Approach by Applying Multivariate Logistic Regression Model into General User Pattern ()

Received 12 September 2016; accepted 15 August 2016; published 18 August 2016

1. Introduction

Recent researches on collaborative filtering (CF) focus on inherent information about users and items and how to recommend such relevant items to such users. Database used to build up CF algorithms is in form of rating matrix composed of ratings that users give to items. Additional contextual factors such as time, place, condition and situation existing in real world are not considered in CF algorithms. For instance, if a user prefers to watch news program in morning and movies in evening then contextual information, namely temporary information, should be aware in recommendation tasks and so, it is inappropriate to provide movies to her/him in the morning even though such movies are the most relevant to her/him.

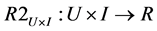

Given a training set which is a rating matrix and a user who requires recommendations, CF algorithm tries to predict rating value on items which are not rated by this user. After that CF algorithm makes a list of such items arranged in order of predictive rating values and recommends this user such list. In other words, CF algorithm constructs a predictive function R2 ( [1] , pp. 217-250) whose cross domain is the Cartesian product of a set of users U and a set of items I. The domain U × I is also called rating matrix. Co-domain of function R2 is a set of predictive ratings denoted R.

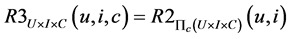

Function R2 called traditional 2-dimension (2D) mapping doesn’t consider contextual factors now and it can lack information necessary to highly accurate prediction. Suppose contextual information including location, time and companion are added to prediction process, the 2D function R2 becomes the 3-dimension (3D) mapping denoted as below:

Note that C, U × I and U × I × C represent context domain, 2D (cross) domain and 3D (cross) domain, respectively. In order words, function R3 gives recommendations to user under circumstances specified as contextual information.

Although context has many different types, we can reduce these types into three main types in order to answer three question forms: when, where and who ( [1] , pp. 224-225).

Time type indicates the time when user requires recommendation, for example: date, day of week, month, and year.

Location type indicates the place where user requires recommendation, for example: theater, coffee house.

Companion type indicates the persons with whom user goes or stays when recommendation task is required, such as: alone, friends, girlfriend/boyfriend, family, co-workers.

Contextual information is organized in two forms: hierarchical structure ( [2] , p. 1537) and multi-dimensional data (MD) model.

According to hierarchical form, the context domain C is defined as a set of contextual dimension K = (K1, K2, K3, ∙∙∙, Kn). K is represented in hierarchy structure, whose attributes Ki(s) are associated with the ascending order of fine level. For example, given attributes Ki and Kj where I < j, Kj is finer than Ki and so Ki contains Kj. It is easy to recognize that K1 is the coarsest attribute which contains all remaining attributes K2, K3 ∙∙∙, Kn. Each Ki contains values at the same level i and it can be split into finer levels. An example of contextual dimension is shown in Figure 1.

In above example, K2 = {City, Province}, K3 = (City → District, City → Suburb district, Province → District, Province → Suburb district).

According to MD form, context domain C is defined as the Cartesian product of n dimension, C = D1 × D2 × ∙∙∙ × Dn. Each dimension Di, in turn, is a set of attributes, Di = (ai1, ai2, ∙∙∙, aik). For example, suppose C has only one dimension of time denoted D1 = Time (day of week). So, the cross domain U × I × C of predictive function R3 constitutes a 3D cube: User (name), Item (book name) and Time (day of week). Each block in this cube is assigned a rating which is the predictive outcome of function R3. Figure 2 depicts a MD cube ( [1] , p. 227).

Figure 2 indicates that given user “John” (101), item “novel book” (7) and time “Sunday” (1), the predictive outcome of R3 gains the highest value 5.

There are three approaches ( [1] , pp. 232-233) to apply context into recommendation process:

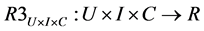

Contextual pre-filtering: Firstly, given context  is used to select user-item pairs (u, i) which are more relevant to this context, leading to obtain the aware-context cross domain U × I. After that traditional 2D function R2 is taken on such cross domain.

is used to select user-item pairs (u, i) which are more relevant to this context, leading to obtain the aware-context cross domain U × I. After that traditional 2D function R2 is taken on such cross domain.

Contextual post-filtering: Firstly, traditional 2D function R2 is used to produce the list of recommended item. After that context C is used to fine-tune this list in order to remove irrelevant items according to concrete context.

Contextual modeling: The 3D function R3 is used directly on context-aware cross domain U × I × C.

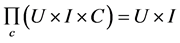

The basic idea of contextual pre-filtering is to project the 3D domain U × I × C on 2D plane, based a concrete context  so that such 3D domain is reduced to 2D domain U × I. Let

so that such 3D domain is reduced to 2D domain U × I. Let  be projection operation based on condition context c, we have:

be projection operation based on condition context c, we have:

The concrete context  which is strict projection condition can make the cross domain U × I small or sparse, causing low predictive accuracy. So generalization technique is used to make projection condition loose, namely the exact condition c is replaced by the more general condition c’. For example, the context c = “Saturday” which indicates that “Mary prefers to go shopping on Saturday” is replaced by more flexible context c = “Weekend” because she can like going shopping on Sunday if she often goes on Saturday. The general context not only expends the space of potential recommendation solutions but also improve predictive accuracy.

which is strict projection condition can make the cross domain U × I small or sparse, causing low predictive accuracy. So generalization technique is used to make projection condition loose, namely the exact condition c is replaced by the more general condition c’. For example, the context c = “Saturday” which indicates that “Mary prefers to go shopping on Saturday” is replaced by more flexible context c = “Weekend” because she can like going shopping on Sunday if she often goes on Saturday. The general context not only expends the space of potential recommendation solutions but also improve predictive accuracy.

The strong point of contextual pre-filtering and post-filtering approaches is the ability to take advantage of legacy recommendation algorithms not taking account into contextual factors.

The essence of contextual modeling is to incorporate directly contextual information into predictive function R3 and so, R3 is constructed as inference model such as data mining, machine learning, heuristic model or statistical model, etc. The 2D predictive function isn’t used in contextual modeling. It implies that the strong

point of this method is pure and powerful inference mechanism. It opens a new trend in context-aware recommendation research, giving a lot of prospects although a few related techniques are extensions of 2D algorithms.

The approach in this paper is the hybrid of contextual post-filtering and contextual modeling. Hence a logistic inference model ( [3] , pp. 372-411) is used as the post filter to make recommendations more relevant to users according to contextual factors. Section 2, which is the main section, describes the proposed approach in detail. The concept of general user pattern (GUP) is firstly introduced and then the collaborative filtering algorithm based on GUP and logistic model is mentioned. The equation to solve logistic model, which is the most important feature of the proposed algorithm, is constructed with support of mathematical tools. An example is given at the end of section 2 for illustrating the proposed approach. Section 3 is the conclusion.

2. Basic Idea and Details of the New Approach

The new approach is suggested by two comments:

Although contextual information is necessary to improve the quality of recommendation process but it cannot replace essential rating information in collaborative filtering research. Inference model taking into account contextual factor should be used as the filter to adjust recommendations returned from predictive ratings so as to give users more appropriate items in concrete circumstances.

When additional contextual information is considered, the speed of recommendation process is decreased. So inference mechanism should be fast in real-time response.

The basic idea is to apply a fast inference model, namely logistic regression function into a list of recommended items so as to achieve a better recommendation result under contextual information. The logistic regression model responses immediately the binary request “whether or not a list of items is relevant to concrete context or preferred by users”. Because there are various items and each item is associated with an individual regression function, the domain of regression function becomes huge, which decreases the speed of algorithm. In order to solve this problem, the items space is reduced to “general user pattern”.

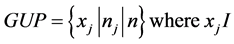

General user pattern (GUP) is known as a set of items to which “many” users give ratings. Since rating values on GUP are not always high, it reflects solely user’s access or rating frequency. Given the threshold θ, let n and nj be the number of total users and the number of users who rate on item xj, we have:

So GUP is defined as a set of items where the ratio of the number of total users to the number of users who rate on such each item is great than or equal to a given threshold θ.

Given a GUP, context c and a logistic regression function f, the regressive (or independent) variables of f are taken from GUP. The response of f is binary variable taking values between 0 and 1 where value 1 indicates that it is likely that user prefers GUP under context c and otherwise. Suppose GUP = {x1, x2, ∙∙∙, xn}, logistic regression function f (x1, x2, ∙∙∙, xn) can be considered to be dependent on GUP.

Given the raw recommended list R, an instance of GUP is initialized on R. This instance denoted INS is a set of predictive rating values taken from R with condition that respective items co-exist in both R and GUP. For example, if R = {x1 = 5, x2 = 3, x3 = 4} and GUP = {x1, x3} then INS = {x1 = 5, x3 = 4} because item x1 and item x3 exist in both R and GUP and their respective values are 5 and 4.

Consequently, regression model f is evaluated on INS with regard to context . If the outcome of f with INS is near to 0 then all items in GUP are removed from R. Finally, the list R is recommended to user after it was fine-tuned by pruning irrelevant items from it.

. If the outcome of f with INS is near to 0 then all items in GUP are removed from R. Finally, the list R is recommended to user after it was fine-tuned by pruning irrelevant items from it.

In general the algorithm has four steps:

1) A 2D predictive function is applied into rating matrix U × I so as to produce a raw list R of recommended items without existence of contextual factors.

2) GUP is discovered over contextual 3D cross domain U × I × C. Items in GUP are frequent items.

3) Multivariate logistic function f is learned from cross domain U × I × C by statistical technique.

4) Function f is used to remove irrelevant items from the list R. In other words, only aware-context items are kept in R. So the final filtered list is the best result which is recommended to users. This step includes two sub- steps:

a) The instance of GUP so-called INS is constructed by matching GUP with R.

b) Function f is evaluated on INS to perform the removal of redundant items from R.

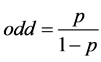

Because step 3 is the most important, the method to construct multivariate logistic function is discussed in detailed now. The probability that GUP = {x1, x2, ∙∙∙, xn} belongs to context  is p; in other words, the probability that user prefers GUP under context c is p. The concept odd ( [3] , p. 11.8) is defined as the ratio of p to 1 − p. This ratio represents how much user likes GUP vice versa how much user dislikes item GUP.

is p; in other words, the probability that user prefers GUP under context c is p. The concept odd ( [3] , p. 11.8) is defined as the ratio of p to 1 − p. This ratio represents how much user likes GUP vice versa how much user dislikes item GUP.

Note that the odd also expresses how likely GUP belongs to context  vice versa how likely GUP does not belongs to context c. The natural logarithm of odd is linear regression function of n variables being GUP.

vice versa how likely GUP does not belongs to context c. The natural logarithm of odd is linear regression function of n variables being GUP.

![]() (1)

(1)

Note that x1, x2, ∙∙∙, and xn are regressive or independent variables whose values are obtained from recommended list R later and coefficients αi(s) are called parameters of logistic model. If odd is considered as response or dependent variable, Equation (1) is re-written as following:

![]() (2)

(2)

where exp (∙) denotes exponent function.

The probability p that user likes items in GUP is computed according to following function derived from Equation (2) with attention that such probability is logistic regression function f (x1, x2, ∙∙∙, xn).

![]() (3)

(3)

Equation (3) represents the multivariate logistic model f with regard to a concrete context c. The approach in this paper uses this equation to estimate whether or not a user prefers a list of recommended items based on a concrete context. For example, given user being “John”, GUP being {“Gladiator”, “Golden Eye”}, recommended movie list being {“Gladiator” with predictive rating 5, “Golden Eye” with predictive rating 4, “Four Rooms” with predictive rating 4} and, if f produces a value greater than or equal to 0.5 when f is evaluated on GUP with regard to time context “evening”, then it asserts that John likes such list and there is no film to be removed. This logistic model is built up in offline mode so as not to affect response time.

The problem needs solved now is to determine parameters αi(s) in logistic function f. We will use method of maximum likelihood estimate (MLE) [4] to construct them. Given training data D is rating cube whose dimensions are user, item and context. Each volume in rating cube is quantified by a value that a user rate on an item in concrete context. If rating cube is projected onto a context c, we get a rating matrix Dc with two dimensions such as user and item. Suppose Dc has m rows and n columns and let yi = (yi1, yi2, ∙∙∙, yin) be the ith row of Dc and it is easy to infer that yij is the ith instance (rating value) of item xj. Suppose rating values yij(s) range from 1 to v where v-most favorite and 1-most dislike and the value 0 indicates that user does not rate on item. Suppose GUP also has n items which are the same to ones in Dc, let ai = (ai1, ai2, ∙∙∙, ain) be a possible instance of such n items. Hence, there are (v+1)n such instances because aij ranges from 0 to v. For example, if n = 2 and v = 2 we have 9 instances (0, 0), (0, 1), (0, 2), (1, 0), (1, 1), (1, 2), (2, 0), (2, 1), (2, 2). Let zi be the number of yi in Dc so that yi = ai and so there are N = (v+1)n such zi(s). Let pi be an instance of logistic function f evaluated on x1 = yi1, x2 = yi2, ∙∙∙, xn = yin, we have:

![]()

It is easy to infer that pi is the probability that the instance ai occurs in Dc. The likelihood function ( [4] , p. 4) of f given training data Dc is:

![]() (4)

(4)

The logarithm likelihood function of f is:

![]() (5)

(5)

The first order partial derivatives of logarithm likelihood function with regard to αk(s) are:

![]()

If there is convention that yi0 = 1, we have:

![]() (6)

(6)

Let ![]() be estimates of α0, α1, ∙∙∙, and αn, respectively. These

be estimates of α0, α1, ∙∙∙, and αn, respectively. These ![]() are maximum points of logarithm likelihood function and hence, we set the first order partial derivatives of logarithm likelihood function with regard to

are maximum points of logarithm likelihood function and hence, we set the first order partial derivatives of logarithm likelihood function with regard to ![]() so as to find out

so as to find out![]() .

.

![]() (7)

(7)

Because Equation (7) is the set of n + 1 non-linear equations with n + 1 variables, it is easy to find out its solution (![]() ) by applying some numerical analysis methods such as Newton-Raphson ( [5] , pp. 67-79) method. Substituting estimates

) by applying some numerical analysis methods such as Newton-Raphson ( [5] , pp. 67-79) method. Substituting estimates ![]() into Equation (3), it is totally to determine the logistic function f.

into Equation (3), it is totally to determine the logistic function f.

For example, suppose there are 2 items, GUP= {x1, x2} receiving binary values such as 0-dislike and 1-like. So, we have n = 2, v = 1 and 4 possible instances y1 = (y11 = 0, y12 = 0), y2 = (y21 = 0, y22 = 1), y3 = (y31 = 1, y32 = 0), y4 = (y41 = 1, y42 = 1). According to Equation (2), instances of logistic function f evaluated on y1, y2, y3, and y4 are:

![]()

Suppose only instance y1 = (y11 = 0, y12 = 0) is observed and so we have z1 = 1, z2 = z3 = z4 = 0. The Equation (7) becomes:

![]()

It is necessary to solve Equation (7) with regard to coefficients α0, α1, and α2. Suppose α0 = 0 and α2 = α1, we have:

![]()

By using software Mathematica [6] , it is easy to find out ![]() and

and ![]() as follows:

as follows:

![]()

where ![]() is imaginary unit.

is imaginary unit.

Finally, probabilities p1, p2, and p3 are determined by substituting complex solutions ![]() and

and ![]() into Equation (2) as follows:

into Equation (2) as follows:

![]()

where ![]() is imaginary unit.

is imaginary unit.

If GUP gets the instance y11 = (x1 = 0, x2 = 0), the logistic probability p1 = f (x1 = 0, x2 = 0) = 0.5 which leads to conclude that user does not like such two items.

3. Conclusions

The approach in this paper is the hybrid of contextual post-filtering and contextual modeling where logistic model is applied in the post stage of recommendation process. The thinking behind this approach is that rating values obtained explicitly by questionnaires or implicitly by inferring users’ behaviors are the most important and contextual factor around users or related to application is additional information which is useful but not essential. Comparing to contextual pre-filtering, this approach restricts the loss of rating information in rating matrix by ignoring data pre-filtering. At the post stage, this approach removes only items which are asserted that users do not like them under contextual condition. Such assertion is the outcome of steady inference model, namely logistic model.

The removal restriction increases recall metric due to reserving solutions space but doesn’t lessen precision metric in comparison of pre-filtering method. Comparing to traditional or post-filtering method, this approach is more accurate because of the stead inference mechanism of logistic function when logistic model is appropriate to binary request such as following yes/no question “whether or not Mary prefers to browse commercial websites in the evening”. Moreover this approach exploits the relationship among items in general user pattern, which is necessary to recommendation process but is not considered in contextual pre-filtering or post-filtering.

Acknowledgements

I express my deep gratitude to Prof. Dr. Ho, Hang T. T., Vinh Long General Hospital, Vietnam Ministry of Health who funded me to complete and publish this research.