On the Performances of Classical VAR and Sims-Zha Bayesian VAR Models in the Presence of Collinearity and Autocorrelated Error Terms

Received 3 September 2015; accepted 22 February 2016; published 25 February 2016

1. Introduction

There are various objectives for studying time series. These include understanding and describing the generating mechanism, forecasting future values, and optimal control of a system [1]. In the time series literature, many authors have found that multicollinearity and autocorrelation commonly afflict time series data. For instance, Gujarati [2] observed that VAR models are usually afflicted by multicollinearity. It has been reported that a threshold on the correlation coefficient is an appropriate indicator of when collinearity begins to severely distort model estimation and subsequent prediction [3]. In recent work, Garba et al. [4] observed that time series data are usually afflicted by autocorrelation. Lastly, Adenomon and Oyejola [5] studied the performances of VAR and BVAR models (assuming harmonic decay) when the bivariate time series were jointly influenced by collinearity and autocorrelation.

The aim of this study is to examine the performances of the classical VAR and Sims-Zha Bayesian VAR model in the presence of collinearity and autocorrelated error terms.

2. Model Description

2.1. Vector Autoregression (VAR) Model

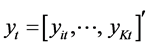

Given a set of k time series variables,  , VAR models of the form

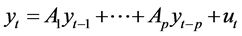

, VAR models of the form

(1)

(1)

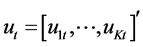

provide a fairly general framework for the Data General Process (DGP) of the series. More precisely this model is called a VAR process of order p or VAR(p) process. Here  is a zero mean independent white noise process with non singular time invariant covariance matrix ∑u and the Ai are (k × k) coefficient matrices. The process is easy to use for forecasting purpose though it is not easy to determine the exact relations between the variables represented by the VAR model in Equation (1) above [6] .

is a zero mean independent white noise process with non singular time invariant covariance matrix ∑u and the Ai are (k × k) coefficient matrices. The process is easy to use for forecasting purpose though it is not easy to determine the exact relations between the variables represented by the VAR model in Equation (1) above [6] .

Also, polynomial trends or seasonal dummies can be included in the model.

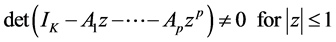

The process is stable if

(2)

(2)

In that case it generates stationary time series with time-invariant means and variance-covariance structure. A basic assumption of a VAR process is the stability condition. A VAR(p) process is said to be stable, or to fulfil the stability condition, if all eigenvalues of its companion matrix have modulus less than 1 [7].
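The eigenvalue form of the stability condition can be checked numerically. The following is a minimal Python sketch, assuming NumPy; the helper names `companion_matrix` and `is_stable` and the coefficient values are hypothetical and chosen only for illustration, not taken from this study.

```python
import numpy as np

def companion_matrix(coefs):
    """Stack VAR(p) coefficient matrices A_1..A_p into the (kp x kp) companion matrix."""
    p = len(coefs)
    k = coefs[0].shape[0]
    top = np.hstack(coefs)                      # first block row: [A_1 A_2 ... A_p]
    if p == 1:
        return top
    # Lower block rows: identity blocks that shift the lags down by one position.
    bottom = np.hstack([np.eye(k * (p - 1)), np.zeros((k * (p - 1), k))])
    return np.vstack([top, bottom])

def is_stable(coefs):
    """True if every eigenvalue of the companion matrix has modulus below 1."""
    eigvals = np.linalg.eigvals(companion_matrix(coefs))
    return bool(np.all(np.abs(eigvals) < 1.0))

# Hypothetical bivariate VAR(2) coefficients (illustrative values only).
A1 = np.array([[0.5, 0.1], [0.0, 0.4]])
A2 = np.array([[0.2, 0.0], [0.0, 0.1]])
stable = is_stable([A1, A2])

# A univariate random walk (A_1 = 1) has an eigenvalue on the unit circle.
random_walk_stable = is_stable([np.array([[1.0]])])
```

Here `stable` is expected to be true for the illustrative coefficients, while the random walk fails the condition because its single eigenvalue equals 1.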

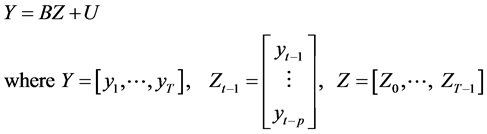

Therefore To estimate the VAR model, one can write a VAR(p) with a concise matrix notation as:

(3)

(3)

Then the Multivariate Least Squares (MLS) for B yields

(4)

(4)
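As an illustration of multivariate least squares for a VAR, the sketch below assumes the standard textbook form in which the responses are stacked column-wise in Y, the lagged regressors in Z, and the estimate is Y Z'(Z Z')^{-1}. The function name `mls_estimate`, the simulated data and the coefficient values are hypothetical; the study itself used R packages for estimation.

```python
import numpy as np

def mls_estimate(y, p):
    """Multivariate least squares for a zero-mean VAR(p).

    y : (T, k) array of observations, one row per time point.
    Returns B_hat with shape (k, k*p), laid out as [A_1 ... A_p].
    """
    T, k = y.shape
    Y = y[p:].T                                   # (k, T-p) responses y_t
    # Row block j of Z holds lag j, so each column is (y_{t-1}', ..., y_{t-p}')'.
    Z = np.vstack([y[p - j:T - j].T for j in range(1, p + 1)])   # (k*p, T-p)
    # Solve (Z Z') B' = Z Y' rather than forming the inverse explicitly.
    return np.linalg.solve(Z @ Z.T, Z @ Y.T).T

# Simulate a long VAR(1) with hypothetical coefficients and recover them.
rng = np.random.default_rng(0)
A_true = np.array([[0.5, 0.1], [0.0, 0.3]])
y = np.zeros((5000, 2))
for t in range(1, len(y)):
    y[t] = A_true @ y[t - 1] + rng.standard_normal(2)

B_hat = mls_estimate(y, p=1)
```

With a long simulated series, `B_hat` should lie close to `A_true`; for the short series considered in this study (for example T = 8) the estimate is much noisier.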

2.2. Bayesian Vector Autoregression with Sims-Zha Prior

In recent times, the BVAR model of Sims and Zha [8] has gained popularity both in economic time series and political analysis. The Sims-Zha BVAR allows for a more general specification and can produce a tractable multivariate normal posterior distribution. Again, the Sims-Zha BVAR estimates the parameters for the full system in a multivariate regression [9] .

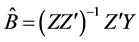

Given the reduced form model

The matrix representation of the reduced form is given as:

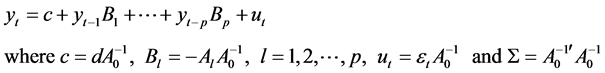

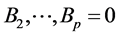

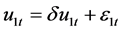

We can then construct a reduced form Bayesian SUR with the Sims-Zha prior as follows. The prior means for the reduced form coefficients are $B_1 = I$ and $B_2, \ldots, B_p = 0$. We assume that the prior has a conditional structure that is a multivariate Normal-inverse Wishart distribution for the parameters in the model. The coefficients for the system of the reduced form model are then estimated with the following estimators

This representation translates the prior proposed by Sims and Zha from the structural model to the reduced form [8]-[11].
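To give a feel for how a prior of this kind pulls the coefficient estimates, the sketch below applies a generic conjugate normal update for the regression Y = XB + U. It is not the Sims-Zha estimator as implemented in MSBVAR: the diagonal prior variance, the hyperparameter names `lam1` and `lam3`, and their values are simplifying assumptions made only for illustration. The prior mean assumed here is the identity on the first lag and zero on the second.

```python
import numpy as np

def posterior_mean(Y, X, B_prior, prior_var):
    """Posterior mean of B in Y = X B + U under an independent normal prior.

    prior_var holds one prior variance per row of B (a diagonal Psi), so the
    update is (Psi^{-1} + X'X)^{-1} (Psi^{-1} B_prior + X'Y).
    """
    Psi_inv = np.diag(1.0 / np.asarray(prior_var, dtype=float))
    return np.linalg.solve(Psi_inv + X.T @ X, Psi_inv @ B_prior + X.T @ Y)

# Hypothetical bivariate data with two lags as regressors (no intercept).
rng = np.random.default_rng(0)
y = rng.standard_normal((102, 2))
Y = y[2:]                              # responses y_t
X = np.hstack([y[1:-1], y[:-2]])       # columns: lag 1 block, then lag 2 block

# Prior mean: identity on the first lag, zero on the second lag.
B_prior = np.vstack([np.eye(2), np.zeros((2, 2))])

# Assumed prior standard deviation lam1 / lag**lam3 (lam3 = 2 gives quadratic decay).
lam1, lam3 = 0.1, 2.0
lags = np.array([1, 1, 2, 2])
B_post = posterior_mean(Y, X, B_prior, (lam1 / lags**lam3) ** 2)

# Limiting cases: a very tight prior returns the prior mean, a very loose one OLS.
B_tight = posterior_mean(Y, X, B_prior, np.full(4, 1e-12))
B_loose = posterior_mean(Y, X, B_prior, np.full(4, 1e12))
B_ols = np.linalg.lstsq(X, Y, rcond=None)[0]
```

The two limiting cases show the role of prior tightness: tighter priors keep the estimate near the prior mean, while looser priors let the data dominate, as in the classical VAR.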

The summary of the Sims-Zha prior is given in Table 1.

3. Simulation Procedure

The simulation procedure is as follows:

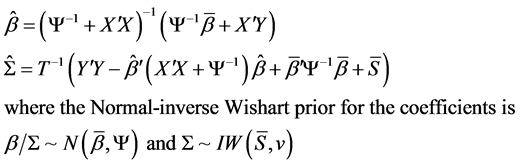

Step 1: We generated an artificial two-dimensional (bivariate) VAR(2) process that obeys the following form:

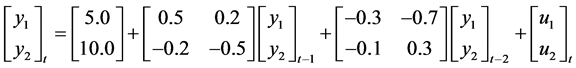

such that  and  where  and ![]() . Our choice here is similar to the work and illustration of Cowpertwait [12]. We considered ten autocorrelation levels as ![]() . Our choice of this model form is to obtain a stable process and a VAR process with a true lag length [13].

Step 2: We then applied the Cholesky decomposition to the data generated in Step 1 to create bivariate time series data in which y1 and y2 have the desired correlation level [14]. We considered ten multicollinearity levels as ![]() .

Steps 1 and 2 together therefore produce a bivariate time series in which y1 and y2 are jointly influenced by multicollinearity and autocorrelation.
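The two steps can be sketched in Python as follows. This is an illustration under stated assumptions, not the code used in the study: the coefficient matrices, the AR(1) form of the error terms, the whitening step before the Cholesky mixing, and the levels `delta = 0.8` and `rho = 0.9` are all hypothetical choices, and the function names are invented for this example.

```python
import numpy as np

def simulate_var2(T, A1, A2, delta, rng, burn=100):
    """Step 1 (sketch): VAR(2) whose errors follow u_t = delta * u_{t-1} + e_t."""
    k = A1.shape[0]
    n = T + burn
    y = np.zeros((n, k))
    u = np.zeros(k)
    for t in range(2, n):
        u = delta * u + rng.standard_normal(k)     # autocorrelated error term
        y[t] = A1 @ y[t - 1] + A2 @ y[t - 2] + u
    return y[burn:]                                # drop the burn-in period

def impose_correlation(y, rho):
    """Step 2 (sketch): give the two columns a sample correlation of rho."""
    z = y - y.mean(axis=0)
    # Whiten first so the existing sample covariance does not distort the target.
    C = np.linalg.cholesky(np.cov(z, rowvar=False))
    white = np.linalg.solve(C, z.T).T
    # Mix with the Cholesky factor of the target correlation matrix.
    L = np.linalg.cholesky(np.array([[1.0, rho], [rho, 1.0]]))
    return white @ L.T

rng = np.random.default_rng(1)
A1 = np.diag([0.5, 0.4])      # hypothetical lag-1 coefficients
A2 = np.diag([0.2, 0.1])      # hypothetical lag-2 coefficients
y_raw = simulate_var2(T=64, A1=A1, A2=A2, delta=0.8, rng=rng)
y_sim = impose_correlation(y_raw, rho=0.9)
r = np.corrcoef(y_sim, rowvar=False)[0, 1]
```

Because of the whitening step, the sample correlation `r` of the transformed series matches the target level up to floating-point error; without it the result would only approximate the target.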

The simulated data assumed time series lengths of 8, 16, 32, 64, 128 and 256. A sample of simulated data is presented in Table 2 below.

3.1. Model Specification

The time series data were generated using a VAR model with lag 2. The choice here is to obtain a bivariate time series with the true lag length. VAR and BVAR models with a lag length of 2 were then used for modeling and forecasting.

![]()

Table 1. Hyperparameters of Sims-Zha reference prior.

Source: Brandt and Freeman, [10] .

![]()

Table 2. Sample of simulated data for T = 8.

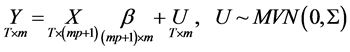

For the BVAR model with Sims-Zha prior, we considered the following range of values for the hyperparameters given below and the Normal-inverse Wishart prior was employed.

We considered two tight priors and two loose priors as follows:

![]()

where nµ is the prior degrees of freedom, given as m + 1, where m is the number of variables in the multiple time series data. In this work nµ is 3 (that is, two time series variables plus one).

Our choice of the Normal-inverse Wishart prior for the BVAR models follows the work of Kadiyala and Karlsson [15], who found that the Normal-Wishart prior tends to perform better than other priors. In addition, Sims and Zha [8] proposed the Normal-inverse Wishart prior because of its suitability for large systems, while Breheny [16] reported that the main advantage of the Wishart distribution is that it is guaranteed to produce positive definite draws. Our choice of the overall tightness ![]() is in line with the work of Brandt, Colaresi and Freeman [17]. In this work we assumed that the bivariate time series follows a quadratic decay. The Quadratic Decay (QD) model has many attractive theoretical properties, which is why it has been applied in many fields of endeavour [18]-[20].

The following criteria were used for forecast assessment:

1) The Mean Absolute Error (MAE) has the formula $\mathrm{MAE} = \frac{1}{n}\sum_{i=1}^{n} |e_i|$, where $e_i$ is the forecast error (the observed value minus the forecast value) and n is the number of forecasts. This criterion measures deviation from the series in absolute terms, that is, the average magnitude of the forecast errors. This measure is one of the most common ones used for analyzing the quality of different forecasts.

2) The Root Mean Square Error (RMSE) is given as $\mathrm{RMSE} = \sqrt{\frac{1}{n}\sum_{i=1}^{n} (y_i - y^f)^2}$, where $y_i$ is the time series data, $y^f$ is the forecast value of y, and n is the number of forecasts [13].

For the two measures above, the smaller the value, the better the fit of the model [21] .
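The two criteria can be computed as in the short Python sketch below. It assumes the usual definitions (mean of absolute errors, and square root of the mean squared error); the function names and the toy numbers are illustrative only.

```python
import numpy as np

def mae(actual, forecast):
    """Mean absolute error between observed values and forecasts."""
    err = np.asarray(actual, dtype=float) - np.asarray(forecast, dtype=float)
    return float(np.mean(np.abs(err)))

def rmse(actual, forecast):
    """Root mean square error between observed values and forecasts."""
    err = np.asarray(actual, dtype=float) - np.asarray(forecast, dtype=float)
    return float(np.sqrt(np.mean(err ** 2)))

# Toy example: three perfect forecasts and one that misses by 4.
actual = [1.0, 2.0, 3.0, 4.0]
forecast = [1.0, 2.0, 3.0, 8.0]

mae_value = mae(actual, forecast)     # 1.0
rmse_value = rmse(actual, forecast)   # 2.0
```

In this toy example the RMSE is twice the MAE because squaring penalizes the single large error more heavily, which is why the two criteria can rank models differently.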

In this simulation study, ![]() where N = 10,000. Therefore, the model with the minimum RMSE and MAE is taken as the preferred model.

3.2. Statistical Packages (R)

In this study, three R packages were used: Dynamic System Estimation (DSE) [22], vars [23], and MSBVAR [24].

4. Results and Discussion

The Root Mean Square Error (RMSE) and Mean Absolute Error (MAE) were obtained for the various models in this work and are presented in Appendix A, while the ranks are presented in Appendix B. Here, only the preferred models with respect to their ranks are presented. The preferred models for time series lengths of 8, 16, 32, 64, 128 and 256 are presented in Tables 3-8, respectively.

In Table 3 below, when T = 8 the BVAR models are preferred at all levels of collinearity and autocorrelation. Also in Table 4 below, when T = 16 the BVAR models are preferred at all levels of collinearity and autocorrelation.

In Table 5 below, when T = 32 the BVAR models are preferred at all levels of collinearity and autocorrelation except in a few cases where the classical VAR is preferred. In Table 6 below, when T = 64 the BVAR models are preferred at all levels of collinearity and autocorrelation except in some cases where the classical VAR is preferred.

In Table 7 below, when T = 128 the BVAR models are preferred at some levels of collinearity and autocorrelation and the classical VAR is preferred at others. In Table 8 below, when T = 256 the BVAR models are preferred at some levels of collinearity and autocorrelation and the classical VAR is preferred at others.

![]()

![]()

Table 3. Preferred model at different levels of collinearity and autocorrelation when T = 8.

![]()

![]()

Table 4. Preferred model at different levels of collinearity and autocorrelation when T = 16.

![]()

![]()

Table 5. Preferred model at different levels of collinearity and autocorrelation when T = 32.

![]()

![]()

Table 6. Preferred model at different levels of collinearity and autocorrelation when T = 64.

![]()

![]()

Table 7. Preferred model at different levels of collinearity and autocorrelation when T = 128.

![]()

![]()

Table 8. Preferred model at different levels of collinearity and autocorrelation when T = 256.

![]()

Table 9. Performance ratings of the classical VAR and Sims-Zha Bayesian VAR.

In Table 9 above, the ratings of the models are compared. For short time series the BVAR4 model dominates, except when T = 8 under the MAE criterion, where the BVAR1 model dominates. In the medium and long term, the BVAR4 model dominates under both criteria. This result reveals that the BVAR4 model is a viable model for forecasting.

5. Conclusion and Recommendation

This work examines the performances of the classical VAR and Sims-Zha Bayesian VAR in the presence of collinearity and autocorrelation. The results from 10,000 simulations reveal that the models' performance varies with the collinearity and autocorrelation levels, and with the time series length. In addition, the results reveal that the BVAR4 model is a viable model for forecasting. Therefore, we recommend that the levels of collinearity and autocorrelation, and the time series length, be considered when choosing an appropriate model for forecasting.

Acknowledgements

We wish to thank TETFUND Abuja-Nigeria for sponsoring this research work. Our appreciation also goes to the Rector and the Directorate of Research, Conference and Publication of the Federal Polytechnic Bida for giving us the opportunity to undertake this research work.

Appendix A

![]()

![]()

Table A1. The RMSE and MAE values of the model for T = 8.

![]()

![]()

Table A2. The RMSE and MAE values of the model for T = 16.

![]()

![]()

Table A3. The RMSE and MAE values of the model for T = 32.

![]()

![]()

Table A4. The RMSE and MAE values of the model for T = 64.

![]()

![]()

Table A5. The RMSE and MAE values of the model for T = 128.

![]()

![]()

Table A6. The RMSE and MAE values of the model for T = 256.

Appendix B

![]()

![]()

Table B1. The ranks of the performances of the model for T = 8.

NOTES

![]()

*Corresponding author.